Phone scams have been around for a while, but recent advancements in artificial intelligence technology are making it easier for bad actors to convince people they have kidnapped their loved ones.

Scammers are using AI to replicate the voices of people’s family members in fake kidnapping schemes that convince victims to send money to the scammers in exchange for their loved ones’ safety.

The scheme recently targeted two victims in a Washington state school district.

Highline Public Schools in Burien, Washington, issued a Sept. 25 notice alerting community members that the two individuals were targeted by “scammers falsely claiming they kidnapped a family member.”

“The scammers played [AI-generated] audio recording of the family member, then demanded a ransom,” the school district wrote. “The Federal Bureau of Investigation (FBI) has noted a nationwide increase in these scams, with a particular focus on families who speak a language other than English.”

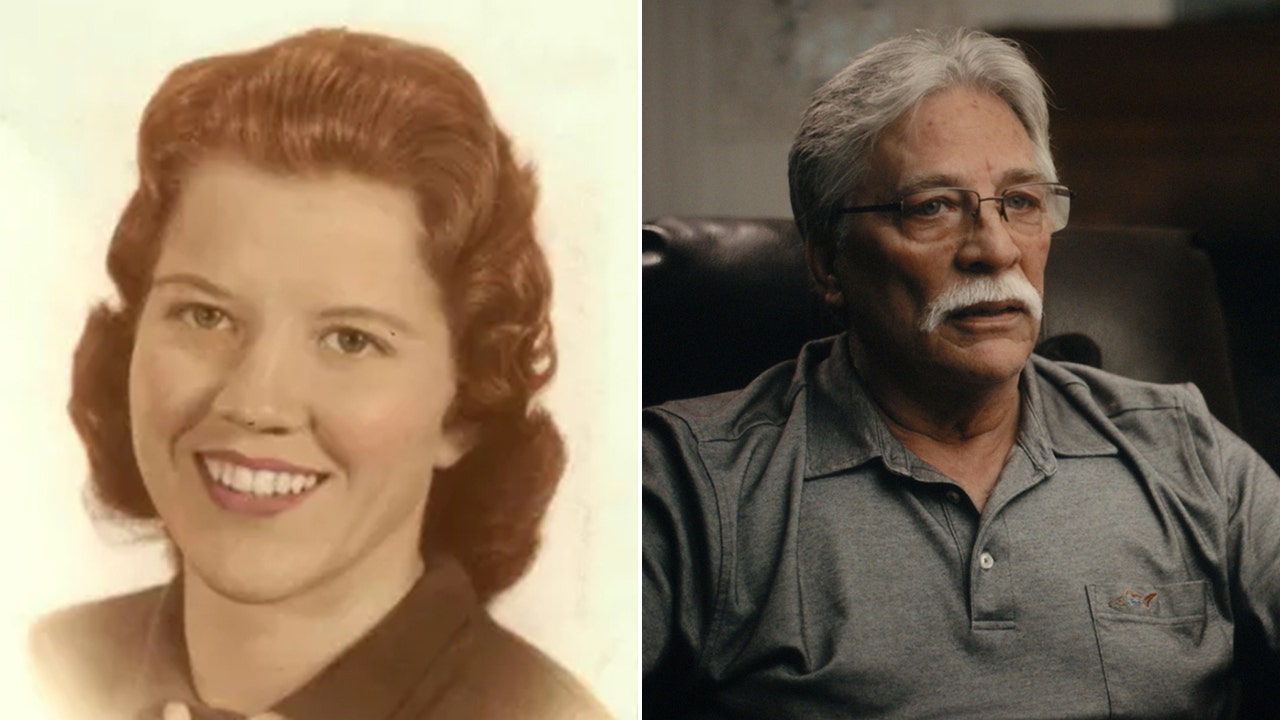

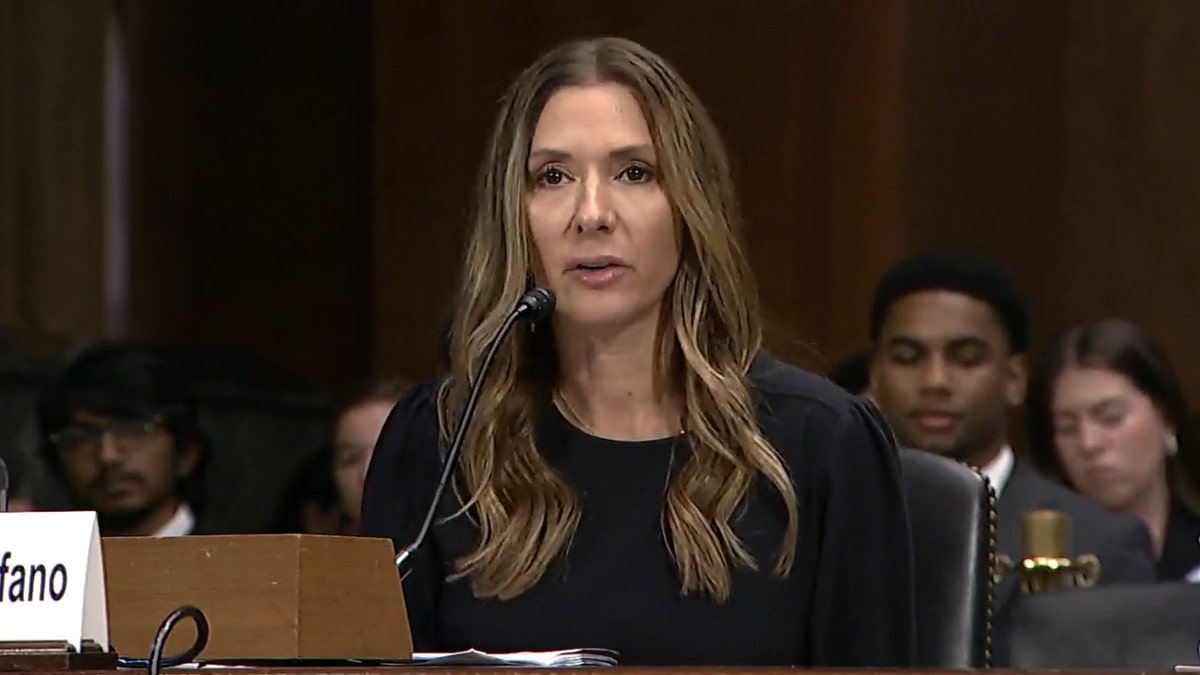

In June, Arizona mother Jennifer DeStefano testified before Congress about how scammers used AI to make her believe her daughter had been kidnapped in a $1 million extortion plot. She began by explaining her decision to answer a call from an unknown number on a Friday afternoon.

“I answered the phone ‘Hello.’ On the other end was our daughter Briana sobbing and crying saying, ‘Mom,’” DeStefano wrote in her congressional testimony. “At first, I thought nothing of it. … I casually asked her what happened as I had her on speaker walking through the parking lot to meet her sister. Briana continued with, ‘Mom, I messed up,’ with more crying and sobbing.”

At the time, DeStefano had no idea a bad actor had used AI to replicate her daughter’s voice on the other end of the line.

She then heard a man’s voice on the other end yelling demands at her daughter, as Briana — her voice, at least — continued to scream that bad men had “taken” her.

“A threatening and vulgar man took over the call. ‘Listen here, I have your daughter, you tell anyone, you call the cops, I am going to pump her stomach so full of drugs, I am going to have my way with her, drop her in [M]exico and you’ll never see her again!’ all the while Briana was in the background desperately pleading, ‘Mom, help me!’” DeStefano wrote.

The men demanded a $1 million ransom in exchange for Briana’s safety as DeStefano tried to contact friends, family and police to help her locate her daughter. When DeStefano told the scammers she did not have $1 million, they demanded $50,000 in cash to be picked up in person.

While these negotiations were going on, DeStefano’s husband found Briana safe at home in bed, unaware of the scam involving her own voice.

“How could she be safe with her father and yet be in the possession of kidnappers? It was not making any sense. I had to speak to Brie,” DeStefano wrote. “I could not believe she was safe until I heard her voice say she was. I asked her over and over again if it was really her, if she was really safe, again, is this really Brie? Are you sure you are really safe?! My mind was whirling. I do not remember how many times I needed reassurance, but when I finally took hold of the fact she was safe, I was furious.”

DeStefano concluded her testimony by noting how AI is making it more difficult for people to trust their own eyes and ears, particularly in virtual settings or on the phone.

Bad actors have targeted victims across the United States and around the world. They are able to replicate a person’s voice through two main tactics: first, by collecting data from a person’s voice if they respond to an unknown call from scammers, who will then use AI to manipulate that same voice to say full sentences; and second, by collecting data from a person’s voice through public videos posted on social media.

That’s according to Beenu Arora, CEO and chair of Cyble, a cybersecurity company that uses AI-driven solutions to stop bad actors.

“So you are basically talking to them, assuming there’s somebody trying to have a conversation, or … a telemarketing call, for example. The intent is to get the right data through your voice, which they can further go and mimic … through some other AI model,” Arora explained. “And this is becoming a lot more prominent now.”

He added that “extracting audio from a video” on social media “is not that difficult.”

“The longer you speak, the more data they’re collecting,” Arora said of the scammers, “and also fewer voice modulations and your slang and how you talk about. But … when you come on these ghost calls or blank calls, I actually recommend to not speak a lot.”

The National Institutes of Health (NIH) recommends that targets of the crime beware of calls demanding ransom money that come from unfamiliar area codes or from numbers other than the supposed victim’s phone. These bad actors also go to “great lengths” to keep victims on the phone so that they do not have an opportunity to contact authorities, and they frequently demand that ransom money be sent via a wire transfer service.

The agency also recommends people targeted by this crime try to slow down the situation, ask to speak directly with the victim, ask for a description of the victim, listen carefully to the victim’s voice and attempt to contact the victim separately via call, text or direct message.

The NIH published a public service announcement saying multiple NIH employees have fallen victim to this type of scam, which “typically begins with a phone call saying your family member is being held captive.” Previously, scammers would allege that a victim’s family member had been kidnapped, sometimes with the sound of screaming in the background of a phone call or video message.

More recently, however, advancements in AI have allowed scammers to replicate a loved one’s voice from publicly available videos on social media, making the kidnapping plot seem more real and leading targets to believe their family members or friends have been taken.

Callers typically provide the victim with information on how to send money in exchange for the safe return of their loved one, but experts suggest victims of this crime take a moment to pause and reflect on whether what they are hearing on the other end of the line is real, even in a moment of panic.

“My humble advice … is that when you get such kind[s] of alarming messages, or somebody is trying to push you or do things [with a sense] of urgency, it’s always better to stop and think before you go,” Arora said. “I think as a society, it’s going to become a lot more challenging of identifying real versus fake because just the advancement which we are seeing.”

Arora added that while there are some AI-centric tools being used to proactively identify and combat AI scams, because of the rapid pace of this developing technology, a long road lies ahead for those working to prevent bad actors from taking advantage of unknowing victims. Anyone who believes they have fallen victim to this kind of scam should contact their local police department.
